Fix Mike Hopefully

Replit Agent built a working app in one hour. I spent the next nine days fixing it.

The idea

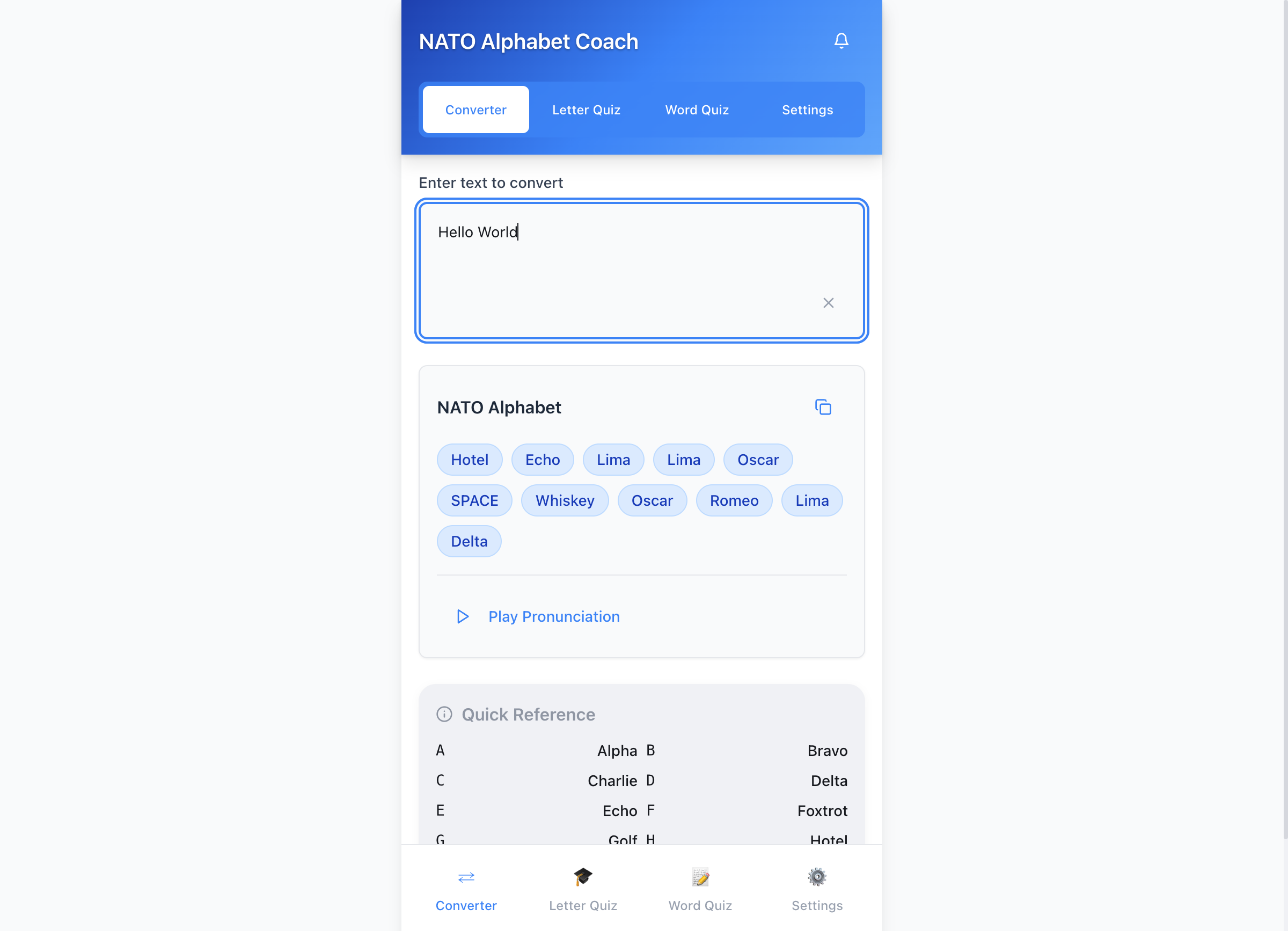

The NATO phonetic alphabet. Alpha, Bravo, Charlie. You think you know it until someone asks you to spell “Zurich” over a radio and your brain goes blank at Z. I wanted a small drill app with spaced repetition, voice input, multiple languages, themes, a converter. Not a startup. Just a useful tool. You can try it yourself. I opened Replit, described what I wanted, and let the Agent build.

The magic hour

Replit Agent took the full brief and put the entire application together in a single session. 77 files. North of 16,000 lines. React, TypeScript, Tailwind, shadcn components. Everything I asked for, wired up and running. First deploy: same day.

The most impressive piece was the spaced repetition algorithm:

    export function selectLetterForReview(
      userProgress: UserProgress[]
    ): string {
      const now = new Date();

      // Priority 1: letters past their review date
      const needReview = userProgress.filter(
        (p) => new Date(p.nextReview) <= now
      );
      if (needReview.length > 0) {
        // Higher difficulty, older reviews first
        needReview.sort((a, b) => {
          const diff = b.difficulty - a.difficulty;
          if (Math.abs(diff) > 0.5) return diff;
          return new Date(a.lastReviewed).getTime()
            - new Date(b.lastReviewed).getTime();
        });
        return needReview[0].letter;
      }

      // Priority 2: letters below 70% accuracy
      const poor = userProgress.filter((p) => {
        const total = p.correctCount + p.incorrectCount;
        return total > 0 && p.correctCount / total < 0.7;
      });
      if (poor.length > 0) { /* worst accuracy first */ }

      // Priority 3: unpracticed letters (random)
      // Fallback: least-attempted letter
    }

The Agent nailed this. Exponential backoff, a 70% accuracy threshold, difficulty that creeps up on success and nudges down on failure. Better than what I would have written by hand.
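
To make "exponential backoff" concrete, here is a minimal sketch of the scheduling step that would run after each answer. It is an illustration under assumptions, not the Agent's code: `ReviewState`, its `intervalDays` field, the `MAX_INTERVAL_DAYS` cap, and `scheduleNextReview` are hypothetical names. The difficulty adjustment is left out because the excerpt does not show it.

```typescript
// Hypothetical shape: only the fields this sketch needs.
interface ReviewState {
  intervalDays: number; // current gap between reviews (assumed field)
  nextReview: string;   // ISO timestamp (assumed representation)
}

const DAY_MS = 24 * 60 * 60 * 1000;
const MAX_INTERVAL_DAYS = 64; // assumed cap so gaps do not grow without bound

// Exponential backoff: double the gap after a correct answer,
// drop back to one day after a miss.
function scheduleNextReview(
  state: ReviewState,
  wasCorrect: boolean,
  now: Date = new Date()
): ReviewState {
  const intervalDays = wasCorrect
    ? Math.min(Math.max(state.intervalDays, 1) * 2, MAX_INTERVAL_DAYS)
    : 1;
  return {
    intervalDays,
    nextReview: new Date(now.getTime() + intervalDays * DAY_MS).toISOString(),
  };
}
```

A letter answered correctly several times in a row drifts out to longer and longer gaps, while a single miss pulls it back to tomorrow's queue.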

And then the first hour ended, and the real work began.

What I had to fix

The theme system spiral

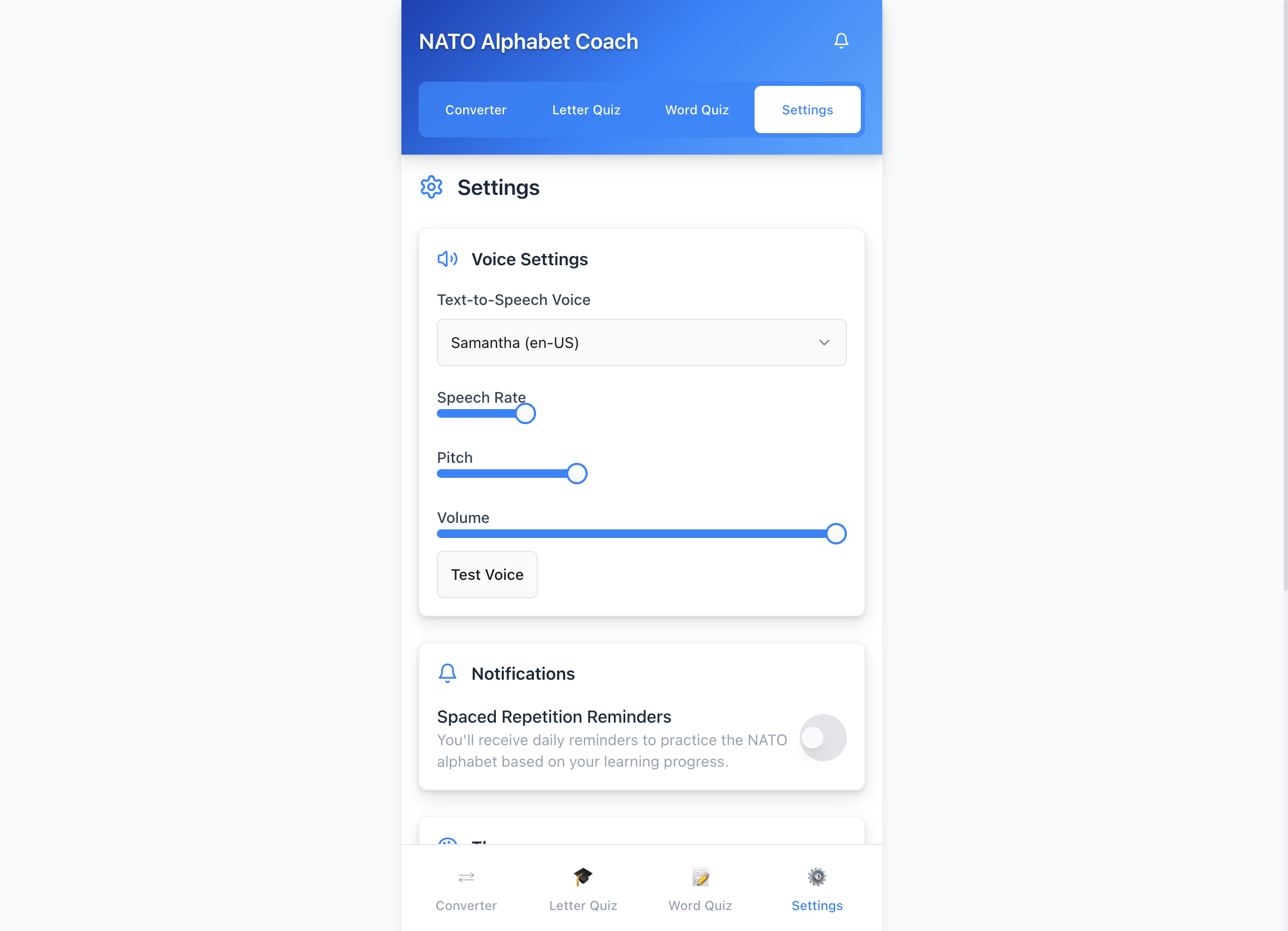

I asked for multiple themes, and the Agent delivered five. Getting them built was the easy part. Getting them to look right was something else entirely.

Text was unreadable in dark mode. Invisible against the rainbow gradient. Wrong contrast on the NATO theme. The Agent produced eight consecutive commits trying to fix visibility. Eight commits. Same problem. Each fix broke a different theme.

Then a Tailwind 4 migration attempt touched 44 files and broke everything. Rollback: 1,363 lines reverted. The Agent could build features. Sustaining them through change was a different skill.
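
Contrast failures like these can be caught mechanically instead of by eye. The sketch below computes the WCAG contrast ratio for each theme's text and background colors and lists the themes that fall below 4.5:1, the AA threshold for normal text. The `themes` table, its color values, and the `failingThemes` helper are hypothetical, not taken from the app. A gradient background would need a check per color stop, which this sketch does not attempt.

```typescript
type RGB = [number, number, number]; // 0-255 per channel

// Relative luminance as defined by WCAG 2.x.
function relativeLuminance(color: RGB): number {
  const linear = color.map((channel) => {
    const c = channel / 255;
    return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
  });
  return 0.2126 * linear[0] + 0.7152 * linear[1] + 0.0722 * linear[2];
}

// Contrast ratio between two colors, from 1 (identical) to 21 (black on white).
function contrastRatio(fg: RGB, bg: RGB): number {
  const a = relativeLuminance(fg);
  const b = relativeLuminance(bg);
  return (Math.max(a, b) + 0.05) / (Math.min(a, b) + 0.05);
}

interface ThemeColors {
  text: RGB;
  background: RGB;
}

// Hypothetical theme table; the real app's colors are not shown in this post.
const themes: Record<string, ThemeColors> = {
  dark: { text: [240, 240, 240], background: [18, 18, 18] },
  washedOut: { text: [120, 120, 120], background: [128, 128, 128] },
};

// Names of themes whose text/background pair falls below the minimum ratio.
function failingThemes(
  all: Record<string, ThemeColors>,
  minRatio: number = 4.5
): string[] {
  return Object.keys(all).filter(
    (name) => contrastRatio(all[name].text, all[name].background) < minRatio
  );
}
```

Run against every theme on each commit, a check like this would flag the case where a fix for one theme quietly breaks another.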

Speech recognition: “fix mike hopefully”

Voice input was part of the original brief. The Agent delivered it on day two. It broke immediately.

What started as a voice input button touching four files turned into a 19-file odyssey. Special cases for different phones and browsers. Different error handlers. Audio monitoring. Recognition event factories. Six major rewrites over six days. The commit messages tell the story:

“Try to fix speech recognition”

“attempting to fix microphone”

“fix mike hopefully”

“Try to fix speech on android”

“try to fix ios speech”

Read those again. Watch the capitalization disappear. Watch “fix” go from a confident verb to a plea.

    // What voice input started as: 4 files, one hook
    export function useSpeechRecognition(config) {
      // ~80 lines of straightforward Web Speech API
    }

    // What it became: 19 files across two directories
    import { getAndroidInfo, getBrowserInfo, getIOSInfo,
      isSpeechRecognitionSupported } from './speech/platform-detection';
    import { handleAndroidError,
      handleIOSError } from './speech/platform-error-handlers';
    import { createRecognitionHandlers } from './speech/recognition-handlers';
    import { initializeSpeechRecognition,
      testMicrophoneAccess } from './speech/speech-recognition-utils';
    import { stopAudioMonitoring } from './speech/audio-monitoring';

Speech recognition support splits awkwardly across the browser landscape. Chromium gives you one workable path, Safari brings its own constraints, and Firefox turns into a fallback case rather than another implementation you can rely on. An experienced developer would build one clean layer to hide those differences and keep the browser-specific logic behind a small number of focused adapters. The Agent didn’t find that boundary early. So each platform fix became its own file, its own handler, its own special case. Nineteen files where three would do.
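
Here is a rough sketch of what that single layer could look like. Everything in it is illustrative: `SpeechAdapter`, `pickSpeechAdapter`, and the other names are hypothetical, and the recognizer is typed through small stand-in interfaces so the sketch does not depend on DOM typings. It assumes the browser exposes either `SpeechRecognition` or the prefixed `webkitSpeechRecognition` constructor, and treats everything else as unsupported.

```typescript
// The only surface the rest of the app would see (hypothetical interface).
interface SpeechAdapter {
  readonly name: string;
  start(onResult: (transcript: string) => void): void;
  stop(): void;
}

// Just enough of the Web Speech API recognizer for this sketch.
interface RecognizerLike {
  lang: string;
  onresult: ((event: any) => void) | null;
  start(): void;
  stop(): void;
}
type RecognizerCtor = new () => RecognizerLike;

// Stand-in for `window`, so the choice of adapter can be tested without a browser.
interface GlobalLike {
  SpeechRecognition?: RecognizerCtor;
  webkitSpeechRecognition?: RecognizerCtor;
}

function createWebSpeechAdapter(
  name: string,
  Ctor: RecognizerCtor,
  lang: string
): SpeechAdapter {
  let active: RecognizerLike | null = null;
  return {
    name,
    start(onResult) {
      const recognizer = new Ctor();
      recognizer.lang = lang;
      // Forward the top alternative of the first result.
      recognizer.onresult = (event) => onResult(event.results[0][0].transcript);
      recognizer.start();
      active = recognizer;
    },
    stop() {
      active?.stop();
      active = null;
    },
  };
}

// Fallback when no recognizer exists: voice input is simply a no-op.
const unsupportedAdapter: SpeechAdapter = {
  name: 'unsupported',
  start() {},
  stop() {},
};

function pickSpeechAdapter(env: GlobalLike, lang: string = 'en-US'): SpeechAdapter {
  if (env.SpeechRecognition) {
    return createWebSpeechAdapter('standard', env.SpeechRecognition, lang);
  }
  if (env.webkitSpeechRecognition) {
    return createWebSpeechAdapter('webkit', env.webkitSpeechRecognition, lang);
  }
  return unsupportedAdapter;
}
```

With a boundary like this, a platform quirk becomes a change inside one adapter rather than a new file that the UI code has to import.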

Deployment friction

Replit generates development URLs that keep changing. Vite rejects unfamiliar hosts. Five config attempts later, the Agent landed on an allow-all host workaround for previews. The notification system called a backend API that didn’t exist and had to be removed entirely. A dozen commits, zero user-visible features. The kind of work that never makes it into a demo.
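
For reference, an allow-all host setting in Vite looks roughly like the sketch below. This is my reconstruction of the kind of workaround described, not the exact config from the repository. It assumes a Vite version that supports `server.allowedHosts`, and the `.replit.dev` suffix in the comment is an assumed example of a narrower alternative.

```typescript
// vite.config.ts (sketch; Vite accepts a plain object as the default export)
const config = {
  server: {
    // `true` turns the host check off entirely. That is tolerable for a
    // throwaway preview, but a narrower list such as ['.replit.dev']
    // (assumed suffix) is safer when the preview domain is predictable.
    allowedHosts: true,
  },
};

export default config;
```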

What surprised me

The RTL support. I asked for multiple languages including Arabic, and the Agent inferred that Arabic needs a right-to-left layout. The wrapper detects the language, flips the document direction, the UI mirrors correctly. Shipped clean on the first pass. When the problem is well-defined and the browser APIs cooperate, the Agent excels.
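
The mechanism is small enough to sketch. The version below is an assumption about how such a wrapper might work, not the Agent's code: `directionFor`, `applyLanguage`, and the `RTL_LANGUAGES` list are hypothetical, and the list is not exhaustive. `RootLike` stands in for `document.documentElement` so the logic can run outside a browser.

```typescript
// Primary language subtags treated as right-to-left in this sketch (not exhaustive).
const RTL_LANGUAGES = new Set(['ar', 'he', 'fa', 'ur']);

type Direction = 'ltr' | 'rtl';

// Map a language tag such as "ar" or "ar-EG" to a text direction.
function directionFor(languageTag: string): Direction {
  const primary = languageTag.toLowerCase().split('-')[0];
  return RTL_LANGUAGES.has(primary) ? 'rtl' : 'ltr';
}

// Minimal stand-in for document.documentElement.
interface RootLike {
  dir: string;
  lang: string;
}

// Set both attributes so CSS and assistive technology see the change.
function applyLanguage(root: RootLike, languageTag: string): void {
  root.lang = languageTag;
  root.dir = directionFor(languageTag);
}
```

In the browser this would be called as `applyLanguage(document.documentElement, 'ar')` whenever the user switches language.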

The git history became its own artifact. The Agent’s commits are verbose: “Enhance user experience with voice control, hints, and compact display.” The commits I wrote by hand are terse: “fix mike hopefully.” You can see the exact moment the human had to take over.

The lesson

There is a trap in vibe coding, and it’s shaped like the Pareto principle. The first 80% is dazzling. The remaining 20% is browser APIs that behave differently, deployment configuration, and visual consistency across states you didn’t test.

The Agent builds the house. You make the plumbing work.

There is no clean, universal speech-recognition solution you can copy across iOS Safari, Android Chrome, and desktop browsers. You can’t Google your way to a clean design and a solid implementation. You have to build one, through trial and error, on real devices, with real microphones. That kind of work is exactly what the 80/20 gap is made of. The NATO Alphabet Coach works. People use it to drill their Alphas and Bravos, and getting it to that point required a developer who could sit with broken microphone permissions across three environments until the code actually worked.

The role had shifted. I wasn’t building software anymore. I was finishing it.